Designing Reliable ETL Workflows for High-Stakes Reporting

Introduction

A single inconsistency in a boardroom report can undo months of trust between your data team and the C-suite. More often than not, the cause is a faulty link in your ETL pipeline. Businesses increasingly rely on custom software development services to build scalable and reliable data workflows, because the value of your data is ultimately determined by how smooth and efficient your data processes are.

The Foundation of Modern Data Architecture

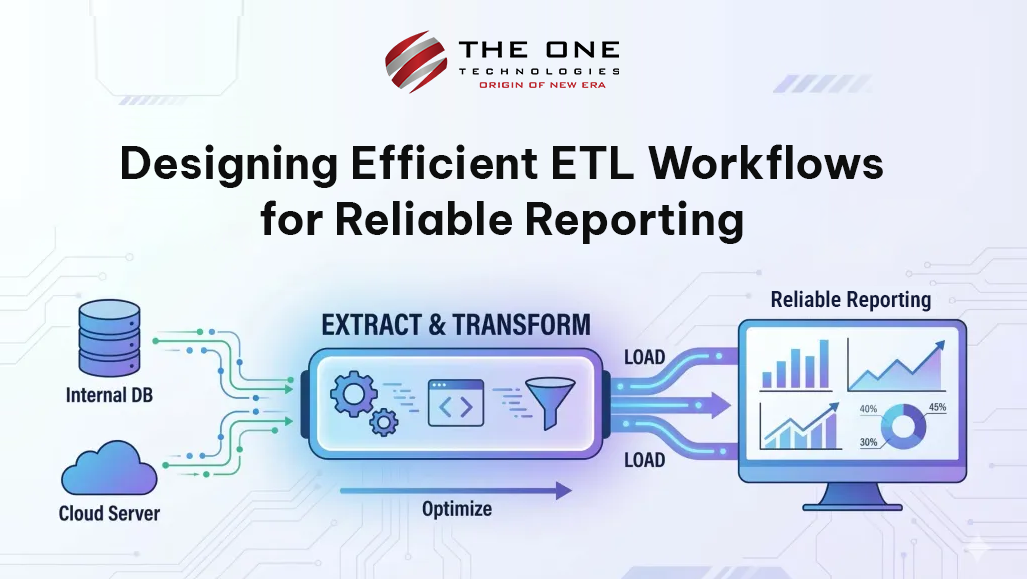

Essentially, an ETL process is a bridge between operational databases and the analytical systems where reporting takes place. The extraction step retrieves data from multiple sources, such as CRM systems, ERP systems, and third-party APIs. The transformation step is where the real work happens: data is cleansed, filtered, and aggregated to suit business needs. The loading step writes the result into a data warehouse or data lake.

Although it sounds easy, the implementation is what separates agile reporting from archaic reporting. Designing for efficiency means looking beyond simple data movement and taking a strategic approach to architecture.

Prioritizing Scalability Through Incremental Loading

The biggest trap to avoid when designing an ETL process is relying on full refreshes. As the amount of data grows, reloading the entire dataset on every run becomes unwieldy: each run takes longer and costs more to compute.

To keep the process efficient, implement incremental loading patterns. Instead of retrieving all the data, each run retrieves only the records that are new or have changed since the previous run. Shorter runs can be scheduled more often, which keeps the reporting environment as close to real-time as possible.
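
The incremental pattern described above is often implemented with a "high-watermark": the pipeline remembers the latest change timestamp it has processed and asks the source only for rows beyond it. The following is a minimal sketch under stated assumptions, not a definitive implementation. The `orders` table, its `updated_at` column, and the function names are hypothetical, and an in-memory SQLite database stands in for a real source system.

```python
import sqlite3


def extract_incremental(conn, last_watermark):
    """Return rows changed after last_watermark, plus the new watermark.

    Assumes a hypothetical `orders` table whose `updated_at` column holds
    ISO 8601 timestamps, which sort correctly as plain strings.
    """
    rows = conn.execute(
        "SELECT id, amount, updated_at FROM orders "
        "WHERE updated_at > ? ORDER BY updated_at",
        (last_watermark,),
    ).fetchall()
    # If nothing changed, keep the old watermark so the next run starts there.
    new_watermark = rows[-1][2] if rows else last_watermark
    return rows, new_watermark


def demo_source():
    """Build a small in-memory source table for illustration only."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL, updated_at TEXT)"
    )
    conn.executemany(
        "INSERT INTO orders VALUES (?, ?, ?)",
        [
            (1, 10.0, "2024-01-01T00:00:00"),
            (2, 20.0, "2024-01-02T00:00:00"),
            (3, 30.0, "2024-01-03T00:00:00"),
        ],
    )
    return conn


if __name__ == "__main__":
    source = demo_source()
    changed, watermark = extract_incremental(source, "2024-01-01T00:00:00")
    print(len(changed), "changed rows; next watermark:", watermark)
```

In a real pipeline the watermark would be persisted between runs (for example in a small control table), and it should only be advanced after the load step has committed successfully.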

Transformation and Data Quality

The transformation layer is where your business logic lives. ETL best practice dictates that this logic should be modular and idempotent, meaning that rerunning a step with the same input produces the same result. If something goes wrong in the middle of your workflow, the workflow should be able to pick up again safely without duplicating data or corrupting the results. Data quality checks should be built into the workflow from the start, not added as an afterthought.
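
One common way to get the idempotent behavior described above is to key every write on a primary key, so that replaying a batch overwrites rows instead of appending duplicates. The sketch below assumes a hypothetical `fact_orders` target table and uses SQLite's `INSERT OR REPLACE` purely for illustration; most warehouses offer an equivalent upsert or `MERGE` statement.

```python
import sqlite3


def make_target():
    """Create a hypothetical in-memory target table for illustration."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE fact_orders (id INTEGER PRIMARY KEY, amount REAL)")
    return conn


def load_idempotent(conn, rows):
    """Write (id, amount) rows so that replaying the same batch is harmless.

    Using the connection as a context manager commits on success and rolls
    back if an exception is raised, so a failed batch leaves no partial data.
    """
    with conn:
        conn.executemany(
            "INSERT OR REPLACE INTO fact_orders (id, amount) VALUES (?, ?)",
            rows,
        )


if __name__ == "__main__":
    target = make_target()
    batch = [(1, 10.0), (2, 20.0)]
    load_idempotent(target, batch)
    load_idempotent(target, batch)  # simulated retry after a failure
    count = target.execute("SELECT COUNT(*) FROM fact_orders").fetchone()[0]
    print("rows after retry:", count)
```

Because the write is keyed on `id`, a retry after a mid-run failure can simply resend the whole batch.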

By passing your data through automated validation rules like "no nulls in primary keys" or "keep currency formats consistent," you avoid the "Garbage In, Garbage Out" pitfall. When your stakeholders can trust that the information they're viewing in their dashboard has been run through a gauntlet of automated quality checks, the quality of your reporting leaps forward.
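
The two example rules above can be expressed as a small validation function that runs before data is loaded. This is a sketch only: the field names `id` and `currency` are hypothetical, and "consistent currency format" is assumed here to mean a three-letter uppercase code.

```python
import re

# Assumption: currencies are stored as three-letter uppercase codes (e.g. "USD").
CURRENCY_PATTERN = re.compile(r"[A-Z]{3}")


def validate(rows):
    """Return a list of human-readable rule violations for a batch of dict rows."""
    errors = []
    for index, row in enumerate(rows):
        # Rule 1: no nulls in primary keys.
        if row.get("id") is None:
            errors.append(f"row {index}: primary key 'id' is null")
        # Rule 2: keep currency formats consistent.
        currency = row.get("currency")
        if not isinstance(currency, str) or not CURRENCY_PATTERN.fullmatch(currency):
            errors.append(f"row {index}: unexpected currency format {currency!r}")
    return errors


if __name__ == "__main__":
    batch = [
        {"id": 1, "currency": "USD"},
        {"id": None, "currency": "USD"},
        {"id": 3, "currency": "usd"},
    ]
    for problem in validate(batch):
        print(problem)
```

A pipeline would typically stop the load, or route the offending rows to a quarantine table, whenever the returned list is not empty.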

Balancing Latency and Resource Optimization

Efficiency often comes down to balancing speed against cost: how urgently the information is needed versus how much computing power you are willing to commit. For some workloads, such as daily financial summaries, a single batch run at midnight is perfectly efficient.

Operational data, however, may require micro-batch or stream-based processing. A good ETL process classifies each dataset by how fresh it needs to be and schedules it accordingly. Offloading computationally expensive, non-critical transformations to off-peak hours, for instance, keeps the data warehouse available for end users running complex queries during business hours.
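
The idea of classifying datasets by their freshness requirement can be made explicit in code. The sketch below is illustrative only: the mode names and the one-minute and one-hour thresholds are assumptions chosen for the example, not recommendations.

```python
from datetime import timedelta


def choose_mode(max_staleness: timedelta) -> str:
    """Map a dataset's tolerated staleness to a processing mode.

    The thresholds are hypothetical and would be tuned per organization.
    """
    if max_staleness <= timedelta(minutes=1):
        return "stream"
    if max_staleness <= timedelta(hours=1):
        return "micro-batch"
    return "nightly-batch"


if __name__ == "__main__":
    datasets = {
        "fraud_alerts": timedelta(seconds=10),
        "inventory_levels": timedelta(minutes=15),
        "daily_financial_summary": timedelta(hours=24),
    }
    for name, staleness in datasets.items():
        print(name, "->", choose_mode(staleness))
```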

Monitoring and Observability in the Pipeline

A silent failure of an ETL pipeline is a nightmare when it comes to trusting your data. It’s possible to be running a report that looks perfectly healthy on the surface, but is secretly missing the last three hours of sales data due to an API timeout. Effective workflows require effective observability.

Observability is more than sending pass/fail messages when things go wrong. It means understanding the lineage of the data, monitoring row counts, and measuring the duration of each processing step. With complete visibility into every step of the pipeline, you can catch issues early, ideally before the CEO sees them on the morning dashboard. Good reporting starts with a pipeline that tells you when it is not working well.
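
Row counts and step durations, as mentioned above, can be captured with a thin wrapper around each pipeline step. This is a minimal sketch: `StepMetrics`, `run_step`, `check_row_drop`, and the 50% drop threshold are hypothetical names and values chosen for illustration.

```python
import time
from dataclasses import dataclass


@dataclass
class StepMetrics:
    name: str
    rows_in: int
    rows_out: int
    seconds: float


def run_step(name, func, rows, metrics):
    """Run one step on a list of rows and record its row counts and duration."""
    start = time.perf_counter()
    result = func(rows)
    elapsed = time.perf_counter() - start
    metrics.append(StepMetrics(name, len(rows), len(result), elapsed))
    return result


def check_row_drop(metrics, max_drop_ratio=0.5):
    """Flag steps that lost more than max_drop_ratio of their input rows."""
    alerts = []
    for m in metrics:
        if m.rows_in and (m.rows_in - m.rows_out) / m.rows_in > max_drop_ratio:
            alerts.append(f"{m.name}: {m.rows_in} rows in, {m.rows_out} rows out")
    return alerts


if __name__ == "__main__":
    collected = []
    raw = list(range(10))
    kept = run_step("filter_small", lambda r: [x for x in r if x < 2], raw, collected)
    for alert in check_row_drop(collected):
        print("ALERT", alert)
```

In practice these metrics would be sent to whatever monitoring system the team already uses, and the thresholds would be based on each step's historical behavior.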

Conclusion

Building an efficient ETL process is ongoing work, grounded in both technology and business objectives. The goal is to make small, incremental improvements, tighten data quality, and optimize resources, turning a process that simply has to be done into one that gives you an advantage.

When your ETL process runs smoothly, your reporting becomes a driving force for your business. The result is an unencumbered flow of information that lets every department move forward with confidence, backed by data that is not only accurate but also timely.

Are you interested in transforming your current data infrastructure into a high-performance growth engine? You are in the right place. The One Technologies invites you to get in touch with our team of expert data engineers, who can design scalable and reliable ETL pipelines to meet your business needs.

About Author

Kiran Beladiya is the co-founder of The One Technologies. He plays a key role in managing the entire project lifecycle, from discussing ideas with clients to overseeing successful releases. Deeply passionate about technology and creativity, he is also an avid writer who continues to nurture and refine his writing skills despite a demanding schedule. Through his work and writing, Kiran Beladiya shares practical insights drawn from real-world experience.